From Noise to Masterpiece: How AI Diffusion Models Create Images

If you've ever been amazed by an AI-generated image from services like DALL-E, Midjourney, or Stable Diffusion, you've witnessed the power of Diffusion Models. This groundbreaking AI technique has revolutionized digital art and image synthesis, but its core concept is surprisingly elegant: it creates complex images by starting with pure chaos.

While it may seem like magic, the process is a learned, step-by-step refinement, turning random static into a coherent and detailed picture based on a user's prompt.

Before Diffusion: A Quick Look Back

For years, the leading method for AI image generation was Generative Adversarial Networks (GANs). In a GAN, two neural networks—a "Generator" and a "Discriminator"—compete against each other. The Generator tries to create fake images, and the Discriminator tries to tell them apart from real ones. This competition drives them both to improve.

While powerful, GANs were notoriously difficult and unstable to train. They often struggled to generate a wide variety of images and could fail in unpredictable ways.

The New Paradigm: The Diffusion Process

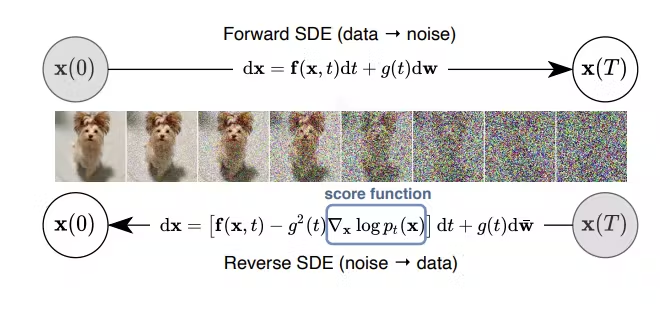

Diffusion Models take a completely different approach, inspired by concepts in thermodynamics. The process is broken down into two key phases:

1. The Forward Process: Adding Chaos

First, the AI learns what an image is by destroying it. It takes a perfectly clear image and systematically adds a small amount of "noise" (random static) over and over in a series of steps. It continues this until the original image is completely indistinguishable from random noise.

The model carefully tracks how the image degrades at each step. This "destruction" phase is the training ground—it's how the AI learns the path from order to chaos.

2. The Reverse Process: Finding Order

This is where the creation happens. The AI learns to reverse the process it just mastered. It starts with a new, completely random field of noise and, guided by a text prompt (e.g., "a photorealistic cat wearing a tiny hat"), it begins to slowly remove the noise, step-by-step.

At each step, it uses what it learned during the forward process to guess what a slightly less noisy version of the image should look like. The text prompt acts as a powerful guide, steering the denoising process toward the desired outcome. After hundreds or thousands of tiny refinement steps, a coherent, detailed image emerges from the static.

Why Diffusion Models Won the Creative Race

This method has several key advantages that have allowed it to surpass older techniques:

- Higher Quality and Diversity: Diffusion models are capable of producing stunningly photorealistic and artistically diverse images that were previously unattainable.

- Training Stability: They are far more stable and reliable to train compared to the delicate balancing act required for GANs.

- Unprecedented Control: The step-by-step nature of the process allows for incredible control via text prompts, enabling users to specify style, composition, and content with fine detail.

By mastering the art of reversing chaos, diffusion models represent a fundamental shift in generative AI, transforming simple text into complex visual art and opening a new frontier for digital creativity.