More Than Just Words: How AI's 'Attention' Unlocks Context

Have you ever read a long, complicated sentence and had to go back to the beginning to remember who or what it was talking about? Early AI models had the same problem, but on a massive scale. They had a kind of “goldfish memory,” struggling to keep track of context over long stretches of text.

The solution to this problem is a revolutionary concept called the Attention Mechanism. It’s the secret ingredient that allows modern AI to understand language with the nuance and depth that we often take for granted.

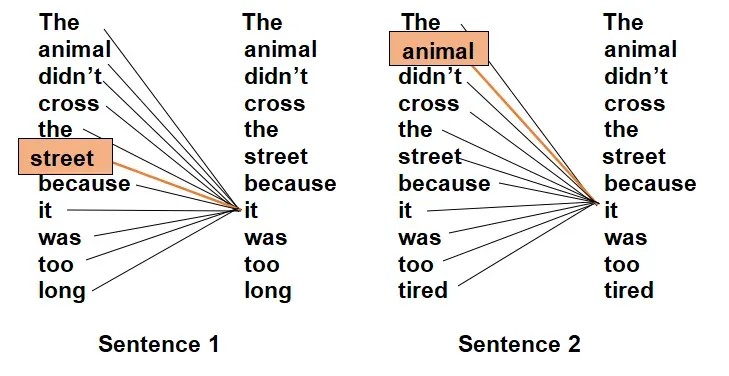

Attention is what allows an AI to “focus.” Instead of treating every word equally, it learns to give more weight and importance to specific words that are most relevant to the task at hand, just like you would use a highlighter in a textbook.

The Old Way: The One-Track Mind

Before the Attention Mechanism, the dominant models for processing language were Recurrent Neural Networks (RNNs). An RNN would read a sentence one word at a time, from left to right, trying to keep a running summary of what it had seen so far in its internal “memory.”

This sequential process had a major flaw. By the time the model reached the end of a long paragraph, the crucial information from the beginning would often be diluted or lost completely.

Consider this sentence: “The report on renewable energy trends, which was commissioned by the international committee and reviewed by three separate labs, finally showed it was a viable alternative.”

By the time a simple RNN got to the word “it,” the memory of “the report” would be so distant and faint that the model would struggle to make the connection.
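To make the fading-memory effect concrete, here is a minimal sketch of a toy recurrent update. The scalar recurrence, the function name `first_word_influence`, and the weights `w_hidden` and `w_input` are assumptions chosen for illustration; real RNNs use learned weight matrices and vector-valued states.

```python
import math

def first_word_influence(n_words, w_hidden=0.5, w_input=1.0):
    """Return how much of the first word is left in a toy RNN's memory.

    Toy scalar recurrence (an assumption for illustration):
        h_t = tanh(w_hidden * h_{t-1} + w_input * x_t)
    Only the first input is non-zero, so the final state measures
    how strongly that first word still registers after n_words steps.
    """
    inputs = [1.0] + [0.0] * (n_words - 1)
    h = 0.0
    for x in inputs:  # strictly sequential: each step needs the previous h
        h = math.tanh(w_hidden * h + w_input * x)
    return h

for n in (2, 10, 20):
    print(f"after {n:>2} words: {first_word_influence(n):.6f}")
```

With these made-up weights the trace of the first word shrinks by at least half at every step, so after twenty words almost nothing of it remains.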

The Breakthrough: Learning to Focus

The Attention Mechanism completely changed the game by allowing the model to look at the entire sentence at once. Instead of a one-way street, it creates a web of connections between all the words.

Here’s how it works on a conceptual level:

- Assign Importance Scores: As the model processes each word, it doesn’t just look at the word itself. It looks back at every other word in the sentence and asks, “How relevant is this word to the one I’m currently focused on?”

- Calculate a “Focus” Weight: It turns those relevance scores into numerical “attention weights,” one for each word in the sentence. The weights are normalized so that they sum to 1, and a higher weight means more relevance.

- Create a Contextual Understanding: The model then uses these scores to create a new, weighted understanding of the word. The word is no longer seen in isolation but as a combination of itself plus the other words it’s “paying attention” to.

In our example sentence, when a well-trained model processes the word “it,” the Attention Mechanism would typically assign a high score to “the report” and low scores to less relevant words. This lets the model link “it” to the report directly, however many words sit between them.
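The three steps above can be sketched in a few lines of Python. This follows one common formulation, scaled dot-product attention, for a single word. The word vectors below are hand-picked for illustration, not learned, and the names `softmax` and `attend` are hypothetical helpers. In a real model the query, key, and value vectors come from learned projections of each word.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    # Step 1: importance scores (how relevant is each word to the query?)
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    # Step 2: attention weights
    weights = softmax(scores)
    # Step 3: contextual representation, a weighted mix of the value vectors
    dims = len(values[0])
    context = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dims)]
    return weights, context

# Hand-picked toy vectors (assumption: a trained model would learn these).
words = ["report", "committee", "labs", "it"]
keys = [[2.0, 0.0], [0.0, 1.0], [0.0, 1.0], [1.0, 1.0]]
values = keys      # reuse the keys as values to keep the sketch small
query = [2.0, 0.0]  # the query vector for the word "it"

weights, context = attend(query, keys, values)
for word, weight in zip(words, weights):
    print(f"{word:>10}: {weight:.2f}")
```

With these toy numbers, “report” receives the largest weight, so the new representation of “it” is dominated by the vector for “report.”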

Why Attention Was a Revolution

This ability to dynamically weigh the importance of words was a massive leap forward. It is the core building block of the Transformer architecture, which underlies models like ChatGPT.

- Solves Long-Range Context: It largely removes the “goldfish memory” problem. Within the model’s context window, a word at the end of a document can connect directly to a word at the very beginning, no matter how far apart they are.

- Enables Parallel Processing: Unlike RNNs, which had to process words one by one, attention calculations can be done for all words simultaneously. This made it possible to train much larger and more powerful models in a fraction of the time.

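- Improves Interpretability: You can visualize the attention weights to see which words the model is “focusing on.” This gives us a partial peek inside the AI’s “mind,” although attention weights are only a rough guide to why a model produced a particular output.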
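The parallelism and interpretability points can be illustrated together with a small sketch that computes the attention weights for every word and prints them as a grid. The vectors are made up, the helper names `softmax` and `attention_matrix` are hypothetical, and the same vector serves as both query and key for each word, which is a simplification of what trained models do.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_matrix(vectors):
    """Attention weights for all words; row i holds word i's weights."""
    scale = math.sqrt(len(vectors[0]))
    # Each row depends only on the input vectors, never on another row's
    # result, so hardware such as a GPU could compute all rows at once.
    return [
        softmax([sum(a * b for a, b in zip(q, k)) / scale for k in vectors])
        for q in vectors
    ]

# Made-up toy vectors (assumption: not taken from any trained model).
words = ["report", "committee", "labs", "it"]
vectors = [[3.0, 0.0], [0.0, 1.0], [0.0, 1.0], [1.0, 0.5]]

matrix = attention_matrix(vectors)
print(" " * 12 + " ".join(f"{w:>9}" for w in words))
for word, row in zip(words, matrix):
    print(f"{word:>10}: " + " ".join(f"{weight:9.2f}" for weight in row))
```

Reading across the row for “it” shows where that word is “looking.” Plotting the same grid as a heatmap is a common way to inspect attention in practice.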

The Attention Mechanism is what allows an AI to grasp the intricate web of relationships in language — understanding pronouns, resolving ambiguities, and producing text that is not just grammatically correct, but coherent and contextually aware.