Teaching AI to Behave: The Secret Sauce of Reinforcement Learning from Human Feedback (RLHF)

A raw Large Language Model, fresh from its initial training, is like a brilliant but unfiltered genius. It has read a vast portion of the internet and can generate fluent, grammatically perfect text on nearly any subject. However, it doesn't inherently understand human values. It doesn't know how to be helpful, polite, or safe.

So how do we transform this raw intelligence into the helpful, conversational assistants we use today? The answer lies in a powerful training process called Reinforcement Learning from Human Feedback (RLHF).

RLHF is the "finishing school" for AI. It's a method of fine-tuning a model not on more data, but on human preferences, teaching it the subtle art of being aligned with our goals and values.

The Problem: An AI That Knows Everything but Understands Nothing

A base LLM is trained to do one thing: predict the next word in a sequence. If trained on the whole internet, it might learn that a question is often followed by an answer. But it also might have learned that a question is followed by a snarky comment, a dangerous instruction, or complete nonsense. It has no built-in "moral compass" or concept of helpfulness.

Without fine-tuning, asking an AI for help could result in an answer that is:

- Unhelpful: Technically correct but not what the user actually needed.

- Unsafe: Providing instructions for harmful activities.

- Biased or Toxic: Reflecting the worst parts of its internet training data.

- Socially Unaware: Lacking the tone and nuance of a good conversation.

The Solution: A Three-Step Training Regimen

RLHF corrects these issues by explicitly teaching the AI what humans consider a "good" response. The process involves three main stages:

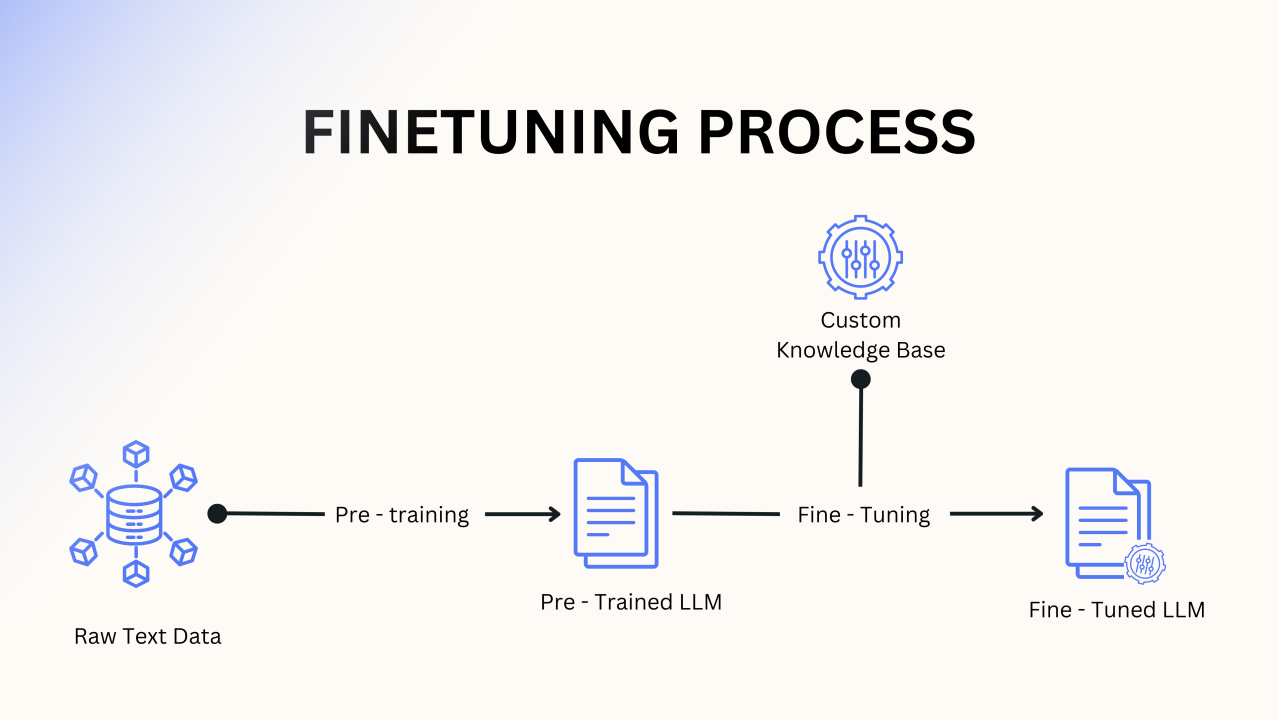

1. Supervised Fine-Tuning (The Initial Lessons)

First, a team of human labelers is hired to create a high-quality, curated dataset. They write out conversations where they act as both the user and an ideal AI assistant, demonstrating exactly how the AI should respond to various prompts. The base LLM is trained on these "perfect" examples to give it an initial understanding of helpful dialogue.

2. Training a "Reward Model" (Learning to Judge)

This is the heart of RLHF. You can't have humans guiding the AI forever—it's too slow. Instead, you teach a second, separate AI to act as a judge.

To do this, the LLM is given a single prompt and generates several different possible answers (e.g., A, B, C, and D). Human labelers then rank these responses from best to worst. This process is repeated thousands of times. This ranking data is used to train a "Reward Model," an AI whose only job is to look at a response and give it a score based on how much a human would likely prefer it.

3. Reinforcement Learning (Practice and Polish)

Now, the original LLM is let loose in a controlled environment. It gets a prompt and generates a response. That response is immediately shown to the Reward Model, which gives it a score.

This score acts as a "reward." The LLM's goal is to maximize its reward. If it gets a high score, its internal settings are adjusted to make it more likely to give similar answers in the future. If it gets a low score, it adjusts its settings to avoid that kind of response. Through millions of cycles of this automated trial-and-error, the LLM polishes its behavior, learning to consistently generate answers that the Reward Model—and by extension, humans—will find helpful, honest, and harmless.

Why RLHF is a Breakthrough

RLHF is one of the most important innovations that made models like ChatGPT and Claude possible. It's the critical bridge between pure technical capability and genuine usability. By embedding human preferences directly into the training process, it aligns the AI's behavior with our own, creating a tool that is not only incredibly powerful but also safe and genuinely useful for everyone.