The AI's Hidden Opinions: What is AI Bias?

You ask an AI image generator to create a picture of a "successful CEO," and it only shows you men in suits. You use a new hiring tool to screen résumés, and it seems to favor applicants from certain neighborhoods over others.

These aren't just weird coincidences; they are examples of AI Bias, in which an AI system makes unfair or prejudiced decisions. It is one of the most serious challenges in the world of artificial intelligence.

Think of an AI as a student who learns everything they know from a specific pile of books. If that pile of books is filled with outdated ideas or only shows one point of view, the student's knowledge will be skewed. The AI is the same way.

What is AI Bias?

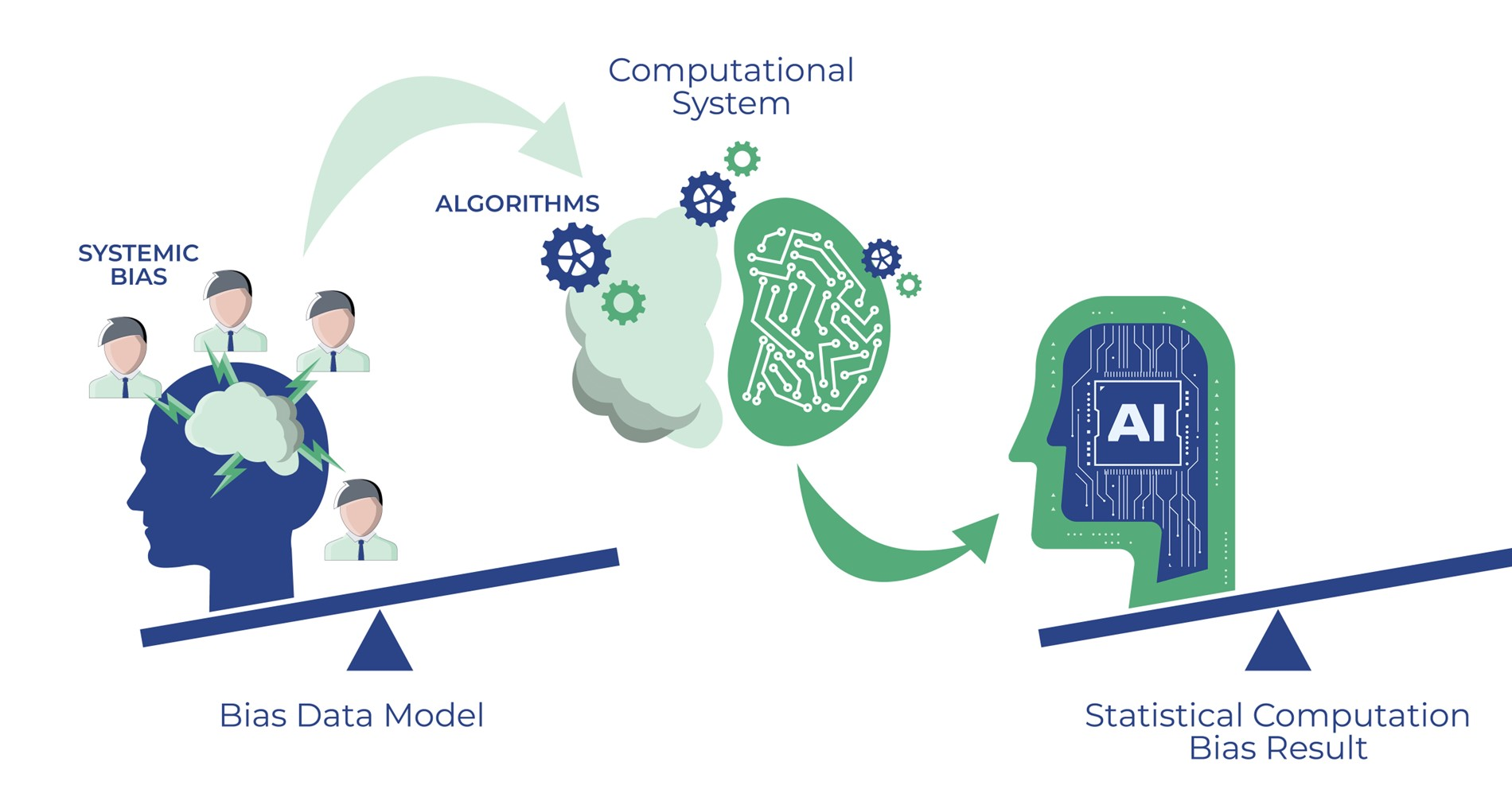

AI Bias is when an AI system produces results that are consistently prejudiced against certain groups of people. It's not that the AI "thinks" in a biased way; the bias reflects patterns in the data it was trained on.

The AI doesn't have its own opinions, but it can echo the hidden opinions and unfair patterns found in its training data.

Common examples include:

- Image Generators: Creating stereotypical images when given neutral prompts (e.g., all nurses are women, all construction workers are men).

- Hiring Tools: An AI trained on a company's past hiring data might learn to unfairly penalize female applicants if the company historically hired more men.

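- Loan Approvals: A system might deny loans to people from specific zip codes because its data shows that, historically, fewer loans were approved there. Because where people live often correlates with race and income, a zip code can act as a stand-in for those characteristics even when they never appear in the data.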

Where Does Bias Come From?

An AI isn't born with bias. It learns it. Here are the main ways it happens:

1. Biased Data

This is the biggest cause. The AI learns from information created by humans, and human society has existing biases. If an AI is trained on historical data from a world with racial or gender inequality, it will learn those same unfair patterns. The rule is simple: garbage in, garbage out.
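To see how "garbage in, garbage out" plays out, here is a deliberately naive sketch in Python. The hiring records, group labels, and the `train`/`predict` helpers are all hypothetical, invented for illustration. Real hiring models are far more complex, but the same pattern can appear whenever a model is fit to skewed past decisions.

```python
from collections import defaultdict

# Hypothetical historical hiring records: (gender, was_hired).
# Assume every applicant was equally qualified; only past decisions differ.
HISTORY = [
    ("man", True), ("man", True), ("man", True), ("man", False),
    ("woman", True), ("woman", False), ("woman", False), ("woman", False),
]

def train(records):
    """Learn each group's historical hire rate (a deliberately naive 'model')."""
    hired = defaultdict(int)
    total = defaultdict(int)
    for group, was_hired in records:
        total[group] += 1
        hired[group] += was_hired  # True counts as 1, False as 0
    return {group: hired[group] / total[group] for group in total}

def predict(model, group, threshold=0.5):
    """Recommend an applicant if their group's past hire rate beats the threshold."""
    return model[group] > threshold

model = train(HISTORY)
print(model)                    # {'man': 0.75, 'woman': 0.25}
print(predict(model, "man"))    # True
print(predict(model, "woman"))  # False
```

The "model" never sees anything about skill. It simply learns that one group was hired more often in the past and turns that history into a rule.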

2. Not Enough Data

Imagine you're teaching an AI to recognize dogs, but you only show it pictures of golden retrievers. Afterward, when you show it a picture of a chihuahua, it might not recognize it as a dog. The same thing happens with people. If the AI's training data mostly features one demographic, its performance is often worse for everyone else.
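A single overall accuracy number can hide this gap, because the majority group dominates the average. The sketch below assumes made-up test results for two hypothetical groups, "A" (well represented in training) and "B" (rare), and breaks accuracy down per group.

```python
from collections import defaultdict

# Hypothetical test results: (group, was_the_prediction_correct).
# Group "A" made up most of the training data; group "B" was rare.
RESULTS = (
    [("A", True)] * 90 + [("A", False)] * 5
    + [("B", True)] * 2 + [("B", False)] * 3
)

def accuracy_by_group(results):
    """Return the share of correct predictions for each group, rounded to 2 places."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, was_correct in results:
        total[group] += 1
        correct[group] += was_correct  # True counts as 1, False as 0
    return {group: round(correct[group] / total[group], 2) for group in total}

overall = sum(was_correct for _, was_correct in RESULTS) / len(RESULTS)
print(f"overall accuracy: {overall:.2f}")  # overall accuracy: 0.92
print(accuracy_by_group(RESULTS))          # {'A': 0.95, 'B': 0.4}
```

Overall accuracy looks high, yet in this example the system is right only 40% of the time for group B.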

3. Human Error

The people who collect and label the data for AI can accidentally introduce their own unconscious biases into the system, influencing what the AI ultimately learns.

Why Does This Matter?

AI bias isn't just a technical glitch; it has real-world consequences. It can lead to people being unfairly denied jobs, loans, or even medical care. As we use AI in more important areas of our lives, ensuring it is fair and equitable becomes critical.

What is being done? Researchers and developers are working to reduce this problem by:

- Carefully collecting more diverse and representative data.

- Building tools to test AI systems for unfair biases before they are released.

- Designing AI to be more transparent about how it makes its decisions.
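One simple kind of bias test compares how often a system says "yes" to each group. The sketch below computes each group's selection rate and divides it by the highest group's rate; flagging ratios below 0.8 echoes the "four-fifths" rule of thumb from US employment guidelines. The loan decisions and region names are hypothetical, and real auditing tools check many more measures than this one.

```python
def selection_rates(decisions):
    """decisions maps group -> list of True/False outcomes (e.g. loan approved or not)."""
    return {group: sum(outcomes) / len(outcomes) for group, outcomes in decisions.items()}

def disparate_impact(decisions, threshold=0.8):
    """Compare each group's selection rate to the highest group's rate.

    Returns (ratios, flagged): the ratio for every group, and the groups whose
    ratio falls below `threshold` (0.8 mirrors the four-fifths rule of thumb).
    """
    rates = selection_rates(decisions)
    best = max(rates.values())
    if best == 0:
        # Nobody was selected at all, so there is no gap in selection rates.
        return {group: 1.0 for group in rates}, []
    ratios = {group: rate / best for group, rate in rates.items()}
    flagged = [group for group, ratio in ratios.items() if ratio < threshold]
    return ratios, flagged

# Hypothetical loan decisions from a model under test, split by region.
decisions = {
    "region_1": [True] * 60 + [False] * 40,  # 60% approved
    "region_2": [True] * 30 + [False] * 70,  # 30% approved
}
ratios, flagged = disparate_impact(decisions)
print(ratios)   # {'region_1': 1.0, 'region_2': 0.5}
print(flagged)  # ['region_2']
```

A flagged group is not proof of unfairness on its own, but it tells the team where to look before the system is released.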

As a user, just knowing that AI bias exists makes you a smarter consumer of technology. It helps you question the results you see and understand that while AI is a powerful tool, it is a reflection of the world it learned from, flaws and all.