The Open-Book Exam for AI: Understanding Retrieval-Augmented Generation (RAG)

Large Language Models (LLMs), like the ones behind ChatGPT, are incredibly knowledgeable, but they have a fundamental flaw: their memory is frozen in time. They only know what they learned during training, and they can't pick up new information on their own. This leads to outdated answers and, sometimes, confident-sounding fabrications known as "hallucinations."

A powerful technique called Retrieval-Augmented Generation (RAG) addresses this. It gives an LLM access to external, up-to-date information before it answers your question.

Think of RAG as giving an AI an open-book exam. Instead of relying solely on its memory, it can first look up the relevant facts in a trusted source, which makes its answers more likely to be accurate, current, and verifiable.

The Problem: The Brilliant but Isolated Brain

Without RAG, an LLM is like a brilliant student who has memorized every textbook up to last year but has been locked in a library ever since. If you ask about yesterday's news or your company's latest internal policy, it can only make an educated guess. This creates two major limitations:

- Knowledge Cutoff: The AI has no awareness of events, data, or documents created after its training was completed.

- Lack of Specificity: It doesn't know about private or specialized information, like your company’s internal HR documents or the technical specs of a product you just launched.

The Solution: A Two-Step Process

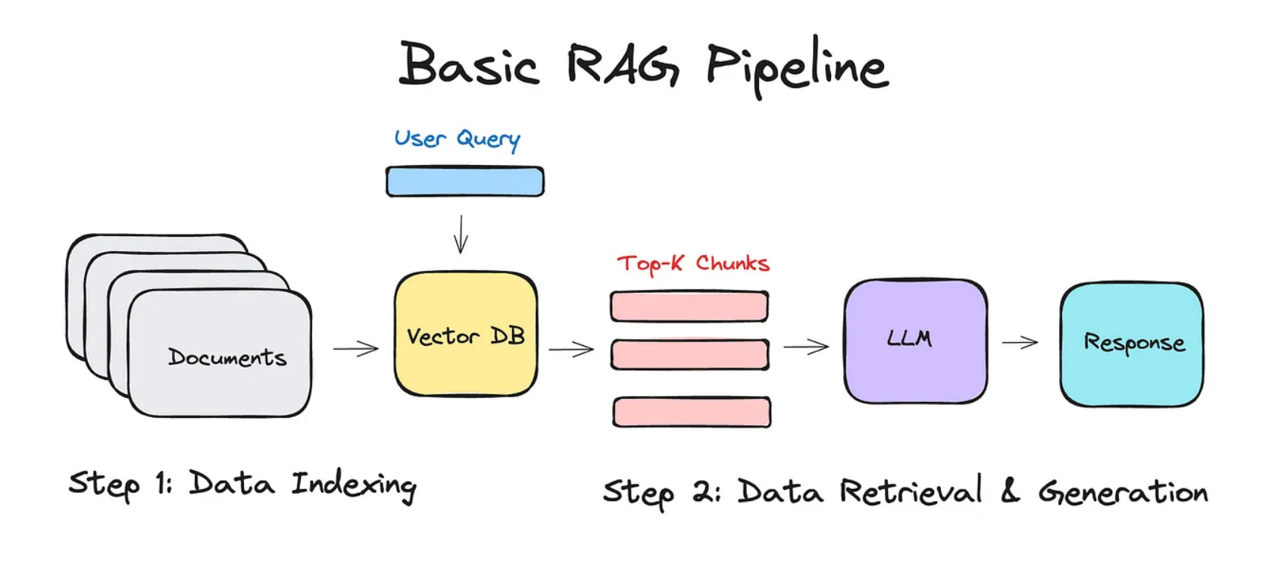

RAG turns the AI from an isolated brain into a real-time researcher. The process has two steps:

1. Retrieval (The "Look-up" Step)

When you ask a question, the RAG system doesn't immediately send it to the LLM. First, a search component called the Retriever scans a specific, up-to-date knowledge base. This could be a set of web search results, a collection of legal documents, your company's internal wiki, or a product manual. The Retriever finds the snippets of text that are most relevant to your query, typically by comparing the query against the documents using keyword matching or numeric "embeddings" that capture meaning.
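To make the look-up step concrete, here is a minimal sketch of a Retriever. It assumes a tiny in-memory knowledge base and scores documents by plain word overlap (bag-of-words cosine similarity); production retrievers usually rely on embedding models and a vector index instead. The names `KNOWLEDGE_BASE` and `retrieve`, and the sample documents, are hypothetical and not taken from any particular library.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base: in a real system these would be chunks of
# your own documents (wiki pages, manuals, policies).
KNOWLEDGE_BASE = [
    "Employees may work remotely up to three days per week.",
    "The X200 router supports Wi-Fi 6 and has four Ethernet ports.",
    "Expense reports must be submitted within 30 days of purchase.",
]

def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Return up to top_k documents that share words with the query, best first."""
    query_vec = Counter(tokenize(query))
    scored = [(cosine_similarity(query_vec, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    # Drop documents with no word overlap at all.
    return [doc for score, doc in scored[:top_k] if score > 0]

print(retrieve("How many days can I work remotely?", KNOWLEDGE_BASE, top_k=1))
```

For the sample question, the remote-work policy shares three words with the query ("work", "remotely", "days") and wins over the expense policy, which shares only "days". Swapping word counts for embeddings changes how similarity is measured, but the shape of the step stays the same: score every document against the query and keep the best few.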

2. Augmented Generation (The "Answer" Step)

Next, the system "augments" your original question by bundling it with the relevant information it just found. It essentially creates a new, much more detailed prompt that says: "Based on these specific facts [insert retrieved text here], answer this question: [insert original question here]."
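The augmentation step is essentially string assembly. The sketch below follows the prompt template described above; the function name `build_augmented_prompt` and the sample snippet are hypothetical, and real systems vary in the exact wording they use.

```python
def build_augmented_prompt(question: str, retrieved: list[str]) -> str:
    """Bundle retrieved snippets and the user's question into one prompt."""
    # Put each retrieved snippet on its own bulleted line.
    context = "\n".join(f"- {snippet}" for snippet in retrieved)
    return (
        "Based on these specific facts:\n"
        f"{context}\n\n"
        f"Answer this question: {question}"
    )

prompt = build_augmented_prompt(
    "How many days can I work remotely?",
    ["Employees may work remotely up to three days per week."],
)
print(prompt)
```

Many RAG systems also add an instruction such as "If the facts above do not contain the answer, say you don't know," which discourages the model from filling gaps with guesses.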

The LLM then generates an answer using this provided context. Because the up-to-date information it needs is right in front of it, its answer is far more likely to be accurate and on-topic.

Why RAG is a Game-Changer

This approach has quickly become one of the most widely used techniques in applied AI because it offers several key benefits:

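- Reduces Hallucinations: By grounding the AI in factual documents, RAG makes it much less likely to make things up, though it cannot rule this out entirely, especially when the retrieved documents are wrong or incomplete.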

- Provides Real-Time Knowledge: AI applications can answer from the most current information in the knowledge base, and keeping that knowledge base fresh does not require retraining the model.

- Enables Citations and Trust: Since the AI uses specific sources, it can cite where it got its information, allowing users to verify its claims.

- Allows for Customization: Companies can connect RAG systems to their own private data, creating expert chatbots that can answer specific questions about their internal operations, products, or policies.
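Citations depend on the system remembering where each retrieved snippet came from. The sketch below assumes each snippet carries a source label, numbers the snippets in the prompt, and maps bracketed markers like `[1]` in the model's answer back to those sources. The `Snippet` class, the file paths, and the answer string are all hypothetical stand-ins; a real model may not always follow the citation instruction, which is why unknown numbers are ignored.

```python
import re
from dataclasses import dataclass

@dataclass
class Snippet:
    text: str
    source: str  # hypothetical label, e.g. a file name or URL of the origin document

def build_cited_prompt(question: str, snippets: list[Snippet]) -> str:
    """Number each snippet so the model can cite it as [1], [2], ..."""
    lines = [f"[{i}] {s.text}" for i, s in enumerate(snippets, start=1)]
    return (
        "Answer using only the numbered sources below and cite them like [1].\n"
        + "\n".join(lines)
        + f"\n\nQuestion: {question}"
    )

def resolve_citations(answer: str, snippets: list[Snippet]) -> list[str]:
    """Map [n] markers in an answer to unique sources, ignoring out-of-range numbers."""
    cited: list[str] = []
    for match in re.findall(r"\[(\d+)\]", answer):
        index = int(match) - 1
        if 0 <= index < len(snippets):
            source = snippets[index].source
            if source not in cited:
                cited.append(source)
    return cited

snippets = [
    Snippet("Employees may work remotely up to three days per week.", "hr/remote-work.md"),
    Snippet("Expense reports must be submitted within 30 days.", "finance/expenses.md"),
]
print(build_cited_prompt("How many days can I work remotely?", snippets))

# A made-up model answer, standing in for a real LLM response.
answer = "You can work remotely up to three days per week [1]."
print(resolve_citations(answer, snippets))  # ['hr/remote-work.md']
```

The same bookkeeping is what makes customization practical: when the snippets come from a company's private documents, the source labels let users click through to the exact policy or manual behind an answer.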

In essence, RAG makes AI not just smarter, but more reliable, trustworthy, and useful in the real world. It’s a bridge between the vast, static knowledge of an LLM and the dynamic, specific information we need every day.