Why Does AI Make Things Up? Understanding 'Hallucinations'

If you've ever used an AI chatbot, you might have experienced something strange. You ask it a question, and it gives you a beautifully written, confident-sounding answer that is completely wrong. It might invent a historical event, cite a fake book, or make up a biography for a real person.

This phenomenon is common enough to have its own name: an AI hallucination.

Think of an AI as an incredibly enthusiastic intern who has read every book in the library but doesn't actually know what's true. Their main goal is to give you a helpful and well-structured answer, even if they have to invent the details to do it.

What is an AI Hallucination?

An AI hallucination is when an AI model presents false, nonsensical, or made-up information as if it were a proven fact.

It’s important to know that the AI is not "lying." Lying implies that it knows the truth and is intentionally trying to deceive you. The AI doesn't know the difference between fact and fiction; it only knows how to arrange words in a way that sounds correct based on the patterns it learned.

Common examples include:

- Making up facts: "The first elephant to walk on the moon, in 1978, was named Bartholomew."

- Citing fake sources: Providing a link to a news article or scientific study that doesn't exist.

- Inventing details: Describing features of a product that it doesn't actually have.

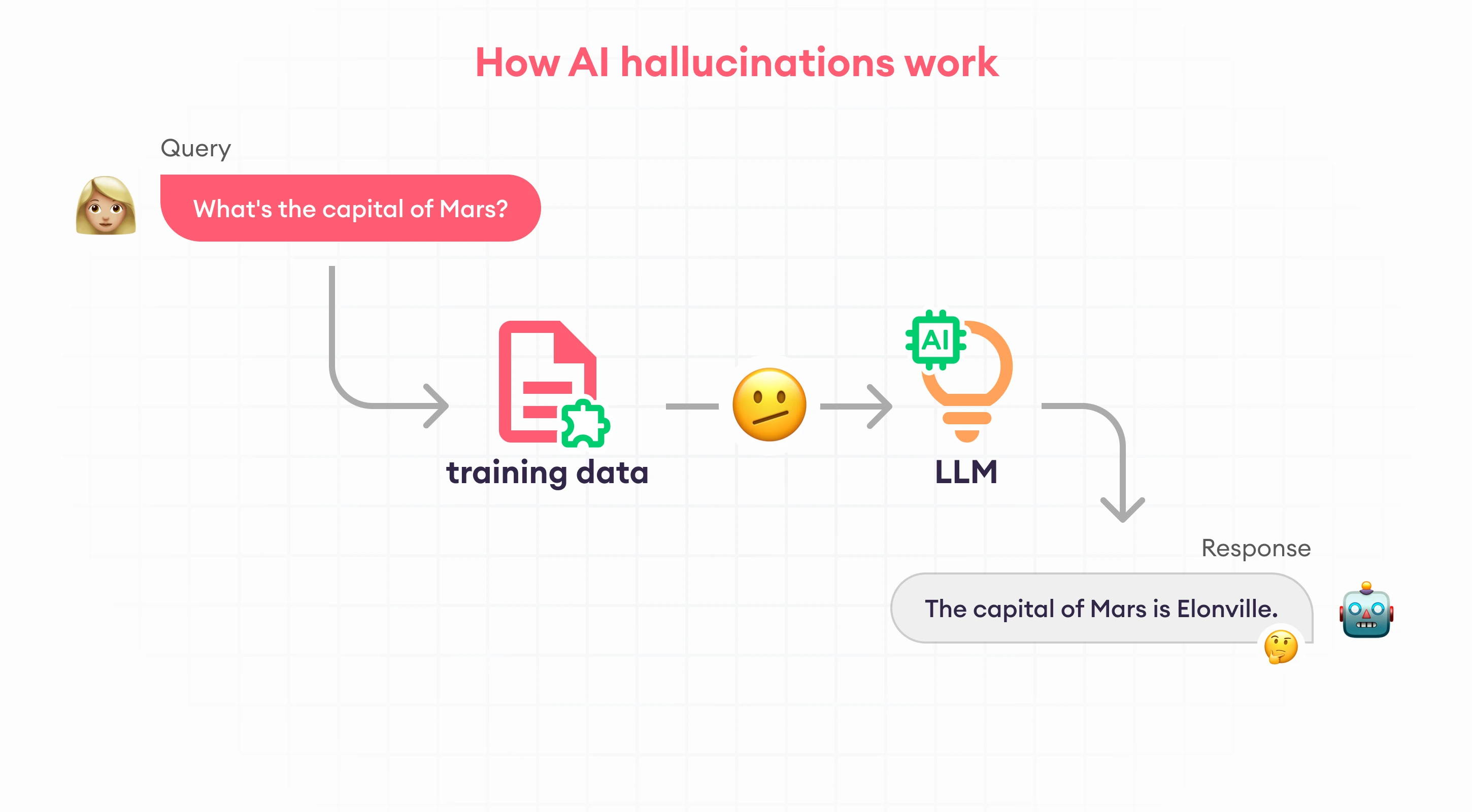

Why Does This Happen?

To understand hallucinations, you need to know what the AI is fundamentally built to do: predict the most likely next word. It is essentially a super-powered autocomplete, like the one on your phone, but far more advanced.

Here are the main reasons it gets things wrong:

1. It's a Pattern-Matcher, Not a Fact-Checker

The AI was trained by reading trillions of words from the internet. It learned the patterns of how words fit together to form sentences. When you ask it a question, it doesn't search a database of facts. Instead, it starts generating words that are statistically likely to follow your question, creating a plausible-sounding answer. If a plausible answer requires a made-up fact, the AI will create one without hesitation.
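
To make the idea concrete, here is a deliberately tiny sketch of pattern-based completion. It is not how a real chatbot is built (real models are vastly larger and more sophisticated); the training text, the function names, and the "look only at the last word" rule are all assumptions made up for this illustration.

```python
from collections import Counter, defaultdict

# Tiny made-up "training text"; real models learn from vastly more data.
CORPUS = (
    "the first person to walk on the moon was neil armstrong . "
    "the commander of apollo 11 was neil armstrong . "
    "the first person to reach the south pole was roald amundsen . "
    "the first elephant to arrive in the zoo was named bartholomew ."
)

def train_bigrams(text):
    """Count which word follows which word in the training text."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def complete(counts, prompt, max_words=8):
    """Extend the prompt by repeatedly picking the most frequent next word."""
    words = prompt.lower().split()
    for _ in range(max_words):
        followers = counts.get(words[-1])
        if not followers:
            break  # this word never appeared in training, so stop
        next_word = followers.most_common(1)[0][0]
        words.append(next_word)
        if next_word == ".":
            break  # end of sentence
    return " ".join(words)

counts = train_bigrams(CORPUS)
print(complete(counts, "The first elephant to walk on the moon was"))
# prints: the first elephant to walk on the moon was neil armstrong .
```

The toy model never checks whether elephants have been to the moon. It only knows that "was" is most often followed by "neil" in its training text, so it produces a fluent, confident, and false sentence.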

2. Gaps in Its Knowledge

An AI’s knowledge is limited to the data it was trained on. Unless it is connected to a search tool, it doesn't know about events that happened after that data was collected, and it might have very little information on niche or obscure topics. When you ask about something it doesn't know, it will often try to fill in the blanks rather than just saying "I don't know."
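
One way to picture this "filling in the blanks" is shown below. The sketch assumes the model gives each candidate next word a score and converts those scores into probabilities with a standard formula called softmax. The scores, the candidate words, and the helper names are hypothetical, and real systems can be trained to say "I don't know" more often than this simplification suggests.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that always sum to 1."""
    largest = max(scores.values())
    exps = {word: math.exp(s - largest) for word, s in scores.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}

def pick(scores):
    """Return the most probable word and its probability."""
    probs = softmax(scores)
    best = max(probs, key=probs.get)
    return best, probs[best]

# Hypothetical scores for the word after "The capital of France is"
well_known = {"Paris": 9.0, "Lyon": 2.0, "Rome": 1.0}
# Hypothetical scores for an obscure question the model saw little data about
obscure = {"1952": 1.2, "1961": 1.1, "1948": 1.0}

for label, scores in [("well-known", well_known), ("obscure", obscure)]:
    word, prob = pick(scores)
    print(f"{label}: picks {word!r} with probability {prob:.2f}")
```

In both cases a word comes out. For the well-known question the top choice is almost certain; for the obscure one it is little better than a one-in-three guess, yet the finished sentence reads just as confidently.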

3. Confusing Questions

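Sometimes, a poorly worded or tricky question can lead the AI down a fictional path. If your question contains a false assumption, the AI might just go along with it and build a fantasy world around your premise. For example, if you ask "Why did Albert Einstein win two Nobel Prizes?" (he won one), the AI may invent a reason for the second prize instead of correcting you.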

How to Handle Hallucinations

AI is an amazing tool, but it's not a perfect oracle. It's more like a creative partner than a calculator. Here’s how you can use it wisely:

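- Always Double-Check Important Information: If an AI gives you a fact, a date, a legal opinion, or a statistic, verify it against a reliable source before you rely on it. A quick search is often enough for a simple fact; legal, medical, or financial questions deserve a qualified expert.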

- Ask for Sources: A good habit is to ask the AI, "Where did you get that information?" Be aware that it can hallucinate sources too, so confirm that each one actually exists and says what the AI claims.

- Use it for Creativity, Not Facts: AI is fantastic for brainstorming ideas, writing drafts, and summarizing text. It's less reliable as a source of hard facts.
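
If you save AI answers as text, the source-checking habit above can be partly automated. The sketch below only collects the links an answer cites so you can open each one yourself; it does not check whether they exist or are accurate. The sample answer, the example.org address, and the function name are made up for illustration.

```python
import re

# Matches http(s) links up to the next space or closing bracket.
URL_PATTERN = re.compile(r"https?://[^\s)\]>]+")

def sources_to_check(answer):
    """Return each distinct URL in the answer, in order of first appearance."""
    seen = []
    for match in URL_PATTERN.findall(answer):
        url = match.rstrip(".,;:!?")  # drop sentence punctuation after a link
        if url not in seen:
            seen.append(url)
    return seen

# Made-up AI answer containing the same invented citation twice.
answer = (
    "According to a 2021 study (https://example.org/elephant-study), "
    "elephants can jump. See also https://example.org/elephant-study."
)

for url in sources_to_check(answer):
    print("Verify by hand:", url)
```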

Hallucinations are a known limitation of today's AI technology, a side effect of how these models generate text. By understanding why they happen, you can use these powerful tools effectively while avoiding the pitfalls of their over-confident mistakes.