Beyond Words: How Attention Helps AI Understand Context

Have you ever reread a long, complicated sentence, going back to the beginning to recall what it said? Early AI models had exactly this problem: a kind of "goldfish" memory that could not hold on to context across long stretches of text.

The solution turned out to be a powerful technique called the Attention Mechanism. It is the key ingredient that gives modern AI the ability to understand language with the kind of nuance we take for granted as a natural human skill.

Attention is what lets an AI "focus." Instead of treating every word as equally important, the model learns to give more weight to the words that matter most for the task at hand, much as you would use a highlighter in a book.

The Old Way: One Piece at a Time

Before the Attention Mechanism, language models were typically built on Recurrent Neural Networks (RNNs). An RNN read a sentence one word at a time, from left to right, trying to keep a summary of everything it had read so far in its internal "memory."

This sequential process created a bottleneck. By the time the model reached the end of a long sentence, information from the beginning had often faded or been lost along the way.

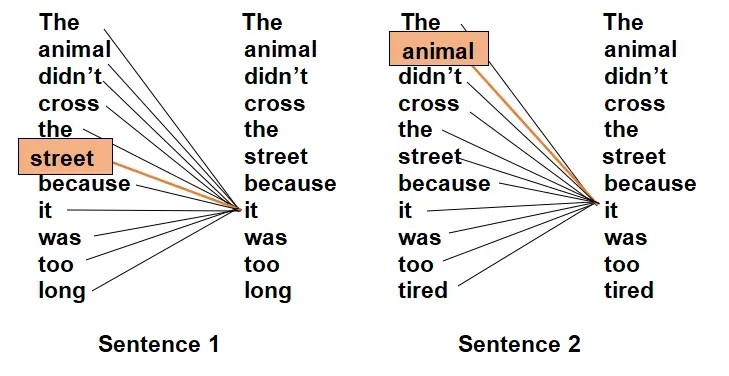

Consider this sentence:

“The report on renewable energy trends, which was commissioned by the international committee and reviewed by three separate labs, finally showed it was a viable alternative.”

By the time an RNN reaches "it," the word it needs, "report," lies far back in memory, so the model may struggle to connect the two.
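To get a feel for this fading, here is a minimal sketch. It is not a real RNN: it assumes the memory behaves like a simple running average in which every new word shrinks the share of the older ones by a fixed factor. The function `remaining_influence` and the `decay` value of 0.8 are hypothetical choices made for illustration only.

```python
# Toy model of sequential memory (an assumption, not a trained RNN):
# state = decay * state + (1 - decay) * current_word
# Under this rule, a word seen `steps_since` steps ago keeps a share of
# (1 - decay) * decay ** steps_since in the current state.

def remaining_influence(position, current, decay=0.8):
    """Share of the word at `position` still present in the state at step `current`."""
    steps_since = current - position
    return (1 - decay) * decay ** steps_since

sentence = (
    "The report on renewable energy trends, which was commissioned by "
    "the international committee and reviewed by three separate labs, "
    "finally showed it was a viable alternative."
)
words = sentence.split()
it_index = words.index("it")
report_index = words.index("report")

# Influence of every word read so far, measured when the model reaches "it".
influence = [remaining_influence(i, it_index) for i in range(it_index + 1)]

print(f"steps between 'report' and 'it': {it_index - report_index}")
print(f"share of 'report' left at 'it': {influence[report_index]:.4f}")
print(f"share of 'showed' left at 'it': {influence[it_index - 1]:.4f}")
```

In this toy setting, "report" retains only a tiny share of the memory by the time "it" arrives, while the word just before "it" is still strongly represented. Real RNNs learn their update rule, so the exact numbers differ, but the distance effect is the same in spirit.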

The Key Breakthrough: Learning to Focus

The Attention Mechanism changed the game by letting the model look at the entire sentence at once. Rather than a "one-way street," it works like a web that connects every word to every other word.

Here is how it works:

- Assigning Importance Scores: When the model processes a word, it does not look at that word alone. It looks at every other word in the sentence and asks, "How relevant is this word to the one I am focusing on?"

- Computing a "Focus" Weight: It assigns a numerical score, the "attention weight," to each of the other words. The higher the score, the more relevant the word.

- Building Contextual Understanding: The model uses these scores to form a weighted view of the words. A word is no longer seen in isolation, but as a blend of itself and the words it relates to.

In our sentence, when the model processes "it," the Attention Mechanism assigns a high score to "the report" and low scores to the less relevant words. This is what lets the AI understand, much as a person would, that "it" refers to "report."
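The three steps above can be sketched in a few lines of plain Python. This is a simplified illustration of scaled dot-product attention, not the code of any particular model. The two-dimensional word vectors are hand-picked assumptions chosen so that "it" lines up with "report"; in a real model the query, key, and value vectors come from learned projections. The helper names `softmax` and `attend` are likewise hypothetical.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Score every key against the query, then blend the values by weight."""
    scale = math.sqrt(len(query))
    # Step 1: importance scores (scaled dot products).
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    # Step 2: "focus" weights.
    weights = softmax(scores)
    # Step 3: context vector as a weighted sum of the values.
    dim = len(values[0])
    context = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, context

# Hand-picked toy vectors (assumed for illustration, not learned).
words = ["report", "committee", "labs", "it"]
vectors = [[2.0, 2.0], [1.0, -1.0], [0.5, 0.0], [0.5, 0.5]]
query_for_it = [1.0, 1.0]

# Keys and values share the same toy vectors here to keep the sketch short.
weights, context = attend(query_for_it, vectors, vectors)

for word, weight in zip(words, weights):
    print(f"{word:>10}: {weight:.2f}")
```

With these assumed vectors, "report" receives the largest weight, so the context vector built for "it" is dominated by "report."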

Why Attention Was a Game-Changer

This ability to weigh the relevance of words became the foundation of the Transformer architecture, which gives models such as ChatGPT their power and usefulness.

- Solving Long-Range Context: It overcame the "goldfish memory" problem. A word at the end of a long paragraph can connect directly to a word at the beginning.

- Enabling Parallel Processing: Unlike RNNs, which process one word at a time, attention can run its computations for all words at once. This made it possible to train larger, more powerful models in less time.

- Improving Interpretability: In many cases, you can inspect the attention scores and see which words the AI is focusing on, which offers a glimpse "inside the AI's brain."
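The last two points can be illustrated together with a small sketch. It computes one row of attention weights per word and shows that no row depends on any other row, which is the property that lets real implementations compute all rows at the same time. It then reads the weights back as a crude interpretability check. The toy vectors and the helper names `attention_row` and `most_attended` are assumptions made for this example, and each word's vector doubles as its own query and key, which real models do not do.

```python
import math

def attention_row(query, keys):
    """Attention weights of one word (the query) over all words (the keys)."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hand-picked toy vectors (assumed for illustration, not learned).
words = ["report", "committee", "labs", "it"]
vectors = [[2.0, 2.0], [1.0, -1.0], [0.5, 0.0], [0.5, 0.5]]

# Each row needs only the shared inputs, never another row's result,
# so the rows could be computed in any order or all at once.
weight_matrix = [attention_row(v, vectors) for v in vectors]
reversed_rows = [attention_row(v, vectors) for v in reversed(vectors)]

def most_attended(row):
    """Name of the word that receives the largest weight in this row."""
    return words[max(range(len(row)), key=row.__getitem__)]

# Interpretability: print where each word is "looking".
for word, row in zip(words, weight_matrix):
    rounded = [round(w, 2) for w in row]
    print(f"{word:>10} -> {most_attended(row):<10} {rounded}")
```

Computing the rows in reverse order gives the same matrix, which is a small-scale view of why this computation parallelizes well. An RNN cannot do this, because each step needs the state produced by the step before it.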

The Attention Mechanism is what enables AI to grasp how words relate to one another in language: resolving pronouns, untangling ambiguity, and producing text that is coherent and well connected, not merely grammatical.